DeepPCD

DeepPCD: Enabling AutoCompletion of Indoor Point Clouds with Deep Learning

Pingping Cai, Sanjib Sur

Computer Science and Engineering, University of South Carolina

DeepPCD is the first learning-based system that facilitates the reconstruction of a large indoor PCD.

Overview

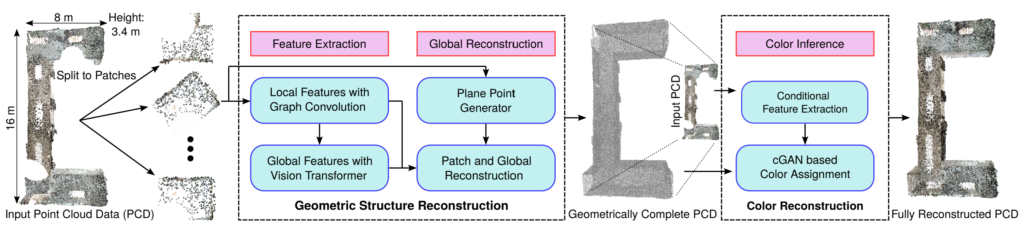

3D Point Cloud Data (PCD) is an efficient machine representation of surrounding environments and is used in many applications. However, measured PCD is often incomplete and sparse because of sensor occlusion and poor lighting conditions. To automatically reconstruct a complete PCD from an incomplete one, we propose DeepPCD, a deep-learning-based system that reconstructs both geometric and color information for large indoor environments. For geometric reconstruction, DeepPCD uses a novel patch-based technique that splits the PCD into multiple parts, uses 3D planes to approximate, extend, and independently reconstruct each part, and then merges and refines the results. For color reconstruction, DeepPCD uses a conditional Generative Adversarial Network to infer the missing color of the geometrically reconstructed PCD from color features extracted from the incomplete color PCD. We experimentally evaluate DeepPCD on several real PCDs collected from large, diverse indoor environments and explore the feasibility of PCD autocompletion in various ubiquitous sensing applications.
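To make the "split into parts and approximate by 3D planes" idea concrete, here is a minimal sketch in Python with NumPy. It is not the DeepPCD implementation: the uniform grid split, the SVD-based least-squares plane fit, and the names `split_into_patches`, `fit_plane`, and `patch_size` are all assumptions chosen for illustration, and the learning-based extension, merging, and refinement steps are omitted entirely.

```python
import numpy as np


def split_into_patches(points, patch_size):
    """Group points into axis-aligned cubic cells with edge length patch_size.

    Assumption: a uniform grid stands in for whatever splitting DeepPCD uses.
    """
    keys = np.floor(points / patch_size).astype(int)
    patches = {}
    for key, point in zip(map(tuple, keys), points):
        patches.setdefault(key, []).append(point)
    return {key: np.array(pts) for key, pts in patches.items()}


def fit_plane(patch):
    """Least-squares plane through a patch, returned as (centroid, unit normal).

    The normal is the right singular vector with the smallest singular value
    of the centered points; its sign is arbitrary.
    """
    centroid = patch.mean(axis=0)
    _, _, vt = np.linalg.svd(patch - centroid)
    return centroid, vt[-1]


# Synthetic data (hypothetical): a noisy horizontal surface at height z = 1.
rng = np.random.default_rng(0)
xy = rng.uniform(0.0, 4.0, size=(500, 2))
z = 1.0 + rng.normal(0.0, 0.01, size=(500, 1))
surface = np.hstack([xy, z])

patches = split_into_patches(surface, patch_size=2.0)
# A plane needs at least three points, so skip smaller patches.
planes = {key: fit_plane(p) for key, p in patches.items() if len(p) >= 3}

for key, (centroid, normal) in sorted(planes.items()):
    print(key, np.round(centroid, 2), np.round(normal, 2))
```

Each patch of the synthetic surface yields a normal close to the z axis (up to sign). In a real scan, a per-patch plane like this gives a compact local description of walls, floors, and tabletops that later stages could extend into occluded regions.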

Publications

- DeepPCD: Enabling AutoCompletion of Indoor Point Clouds with Deep Learning

Pingping Cai, Sanjib Sur

IMWUT'22 Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, Atlanta, USA, September 2022. [Paper] [Slides] [Talk]

- AutoPCD: Learning-Augmented Indoor Point Cloud Completion

Pingping Cai, Edward M Sitar, Sanjib Sur

UbiComp-ISWC'21 ACM International Joint Conference on Pervasive and Ubiquitous Computing and ACM International Symposium on Wearable Computers, Virtual, September 2021. [Paper] [Poster]